A Hardcoded Stub constrains test determinism and execution times

When testing interactions between interdependent applications, we always want to minimise the scope of the System Under Test to ensure deterministic and rapid feedback. This is often accomplished by creating a Stub of the provider application – a lightweight implementation of the provider that supplies canned API responses on demand.

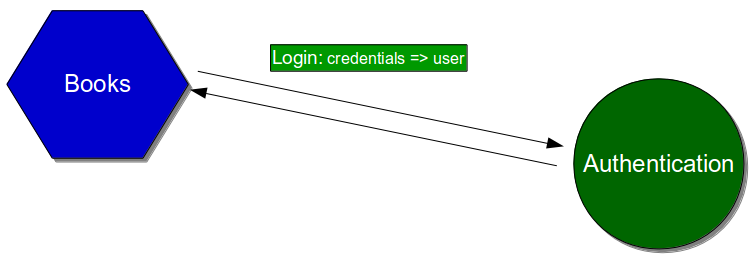

For example, consider an ecommerce website with a microservice architecture. The estate includes a customer-facing Books frontend that relies upon a backend Authentication service for user access controls.

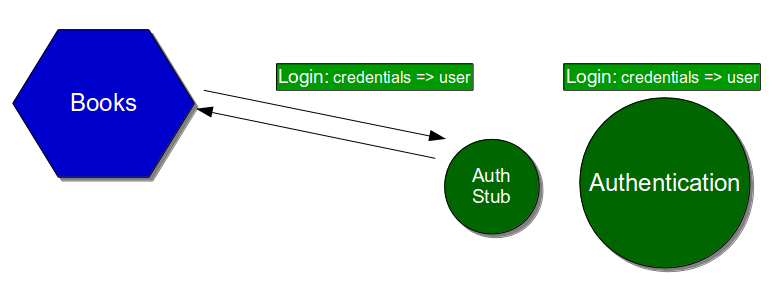

As the Authentication service makes remote calls to a third party, an Authentication Stub is supplied to Books for its automated acceptance testing and manual exploratory testing.

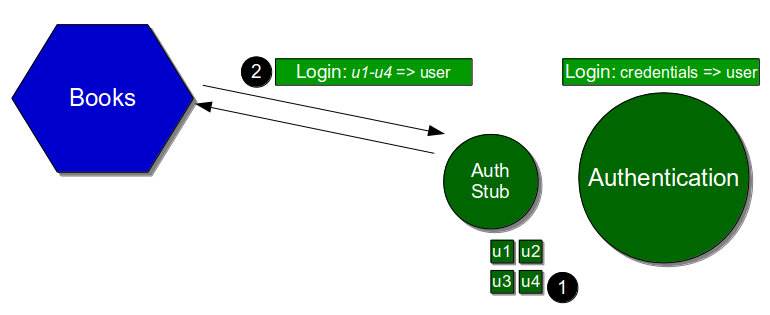

A common Stub implementation is a Hardcoded Stub, in which provider behaviour is defined at build time and controlled at run time by magic inputs. For the Authentication Stub that would mean a static pool of pre-authenticated users [1], accessed by magic username via the standard Authentication API [2].
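
As a rough illustration, a Hardcoded Stub of this kind might look like the following Python sketch. The class name, the magic usernames, and the state strings are all hypothetical, chosen only to show behaviour being fixed at build time and selected at run time by input.

```python
from dataclasses import dataclass


@dataclass
class User:
    username: str
    state: str  # assumed states: "authenticated" or "locked"


class HardcodedAuthenticationStub:
    """Hypothetical stub: every behaviour is decided at build time."""

    def __init__(self) -> None:
        # Static pool of pre-defined users, keyed by magic username.
        self._users = {
            "alice": User("alice", "authenticated"),
            "bob": User("bob", "authenticated"),
            "locked-lucy": User("locked-lucy", "locked"),
        }

    def authenticate(self, username: str) -> str:
        """Stand-in for the standard Authentication API."""
        user = self._users.get(username)
        if user is None:
            return "unknown"
        return user.state


stub = HardcodedAuthenticationStub()
print(stub.authenticate("alice"))        # authenticated
print(stub.authenticate("locked-lucy"))  # locked
```

A consumer test can only reach the behaviours baked into the pool. Anything else needs a code change and a new release of the stub.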

While the Authentication Stub has the advantage of not requiring any test setup, the implicit Books dependence upon pre-defined Authentication behaviours will impair Books test determinism and execution times:

- Changes in the Authentication Stub can cause one to many Books tests to fail unexpectedly, increasing rework

- Adding/removing/updating Authentication behaviours requires a new Authentication Stub release, lengthening feedback loops

- Concurrent test scenarios are constrained by the size of the Authentication Stub user pool, increasing test execution times

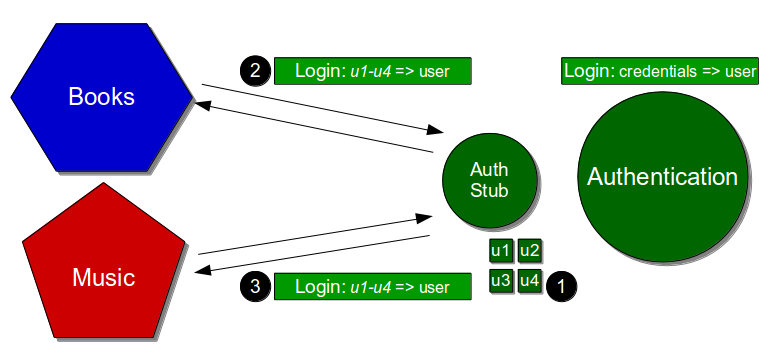

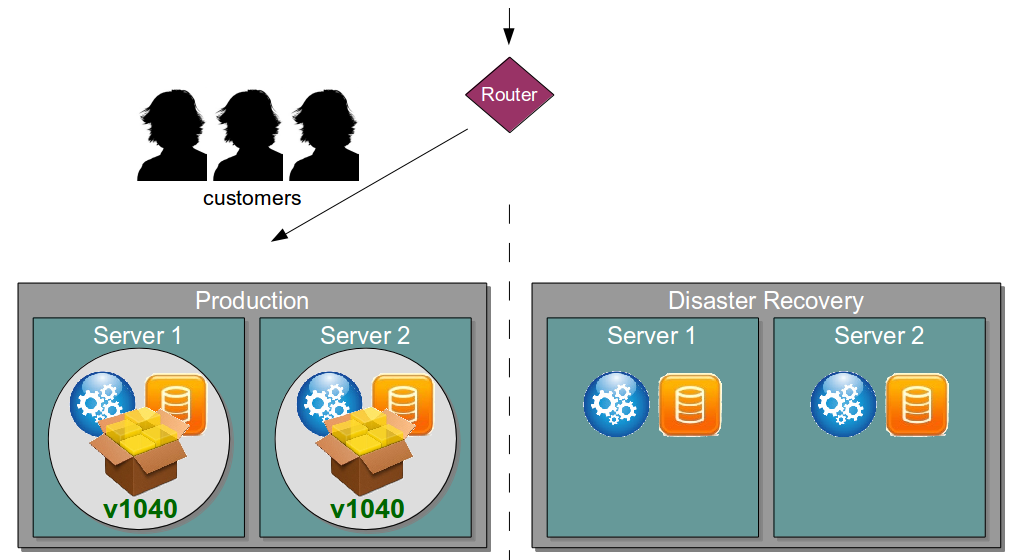

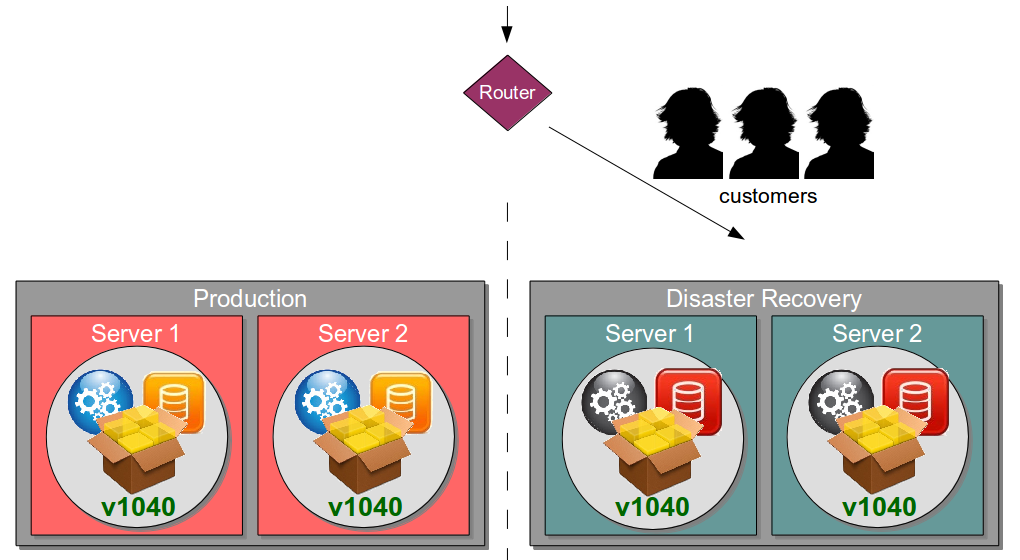

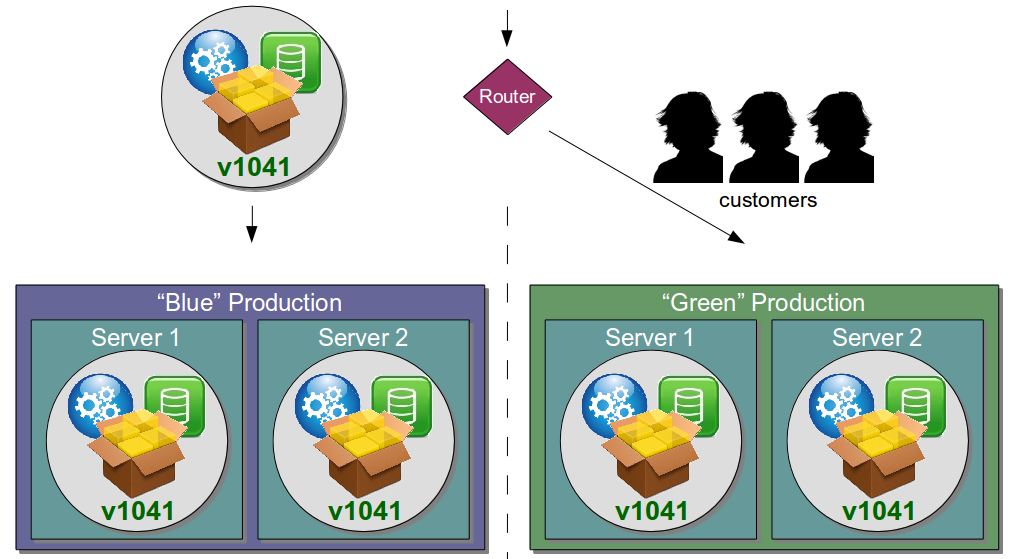

An inability to perform concurrent testing will have a significant impact upon lead times – parallel acceptance tests reduce build times, and parallel exploratory tests speed up tester feedback. This problem is exacerbated when multiple consumers rely on the same Hardcoded Stub, such as a Music frontend tested against the same Authentication Stub as the Books frontend. The same pool of pre-authenticated users [1] is offered to both consumers [2 and 3].

In this situation the simultaneous testing of Books and Music is bottlenecked by the pre-defined capacity of the Authentication Stub, despite their real-world independence. Test data management becomes a key issue, as testers will have to manually coordinate their use of the pre-authenticated users. A Books test could easily impact a Music test or vice versa – for example, a Books tester could accidentally lock out a user about to be used by Music. Such problems can easily lead to wait times within the value stream and inflated lead times.
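
The lock-out scenario can be sketched in a few lines of Python. Everything here is an assumption made for illustration: the stub class, the `login` method, the three-failure lock-out threshold, and the single shared user are not taken from any real Authentication service.

```python
class HardcodedAuthenticationStub:
    """Hypothetical stub with one shared, pre-defined user."""

    MAX_FAILURES = 3  # assumed lock-out threshold

    def __init__(self) -> None:
        self._passwords = {"alice": "s3cret"}
        self._failures = {"alice": 0}

    def login(self, username: str, password: str) -> str:
        if username not in self._passwords:
            return "unknown"
        if self._failures[username] >= self.MAX_FAILURES:
            return "locked"
        if password != self._passwords[username]:
            self._failures[username] += 1
            return "rejected"
        return "ok"


def books_lockout_test(stub: HardcodedAuthenticationStub) -> str:
    # Books deliberately locks the user out to exercise its error page.
    for _ in range(stub.MAX_FAILURES):
        stub.login("alice", "wrong-password")
    return stub.login("alice", "s3cret")


def music_login_test(stub: HardcodedAuthenticationStub) -> str:
    # Music expects the same magic user to log in successfully.
    return stub.login("alice", "s3cret")


shared_stub = HardcodedAuthenticationStub()
books_result = books_lockout_test(shared_stub)
music_result = music_login_test(shared_stub)

print(books_result)  # locked (the outcome Books wanted)
print(music_result)  # locked (Music fails through no fault of its own)
```

Run against a fresh stub, `music_login_test` returns `"ok"`. The failure comes only from state shared with the Books test, which is why the testers end up coordinating by hand.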

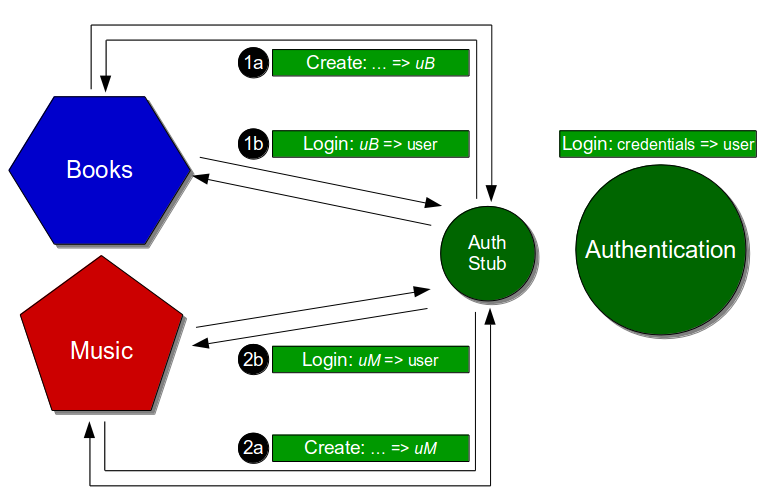

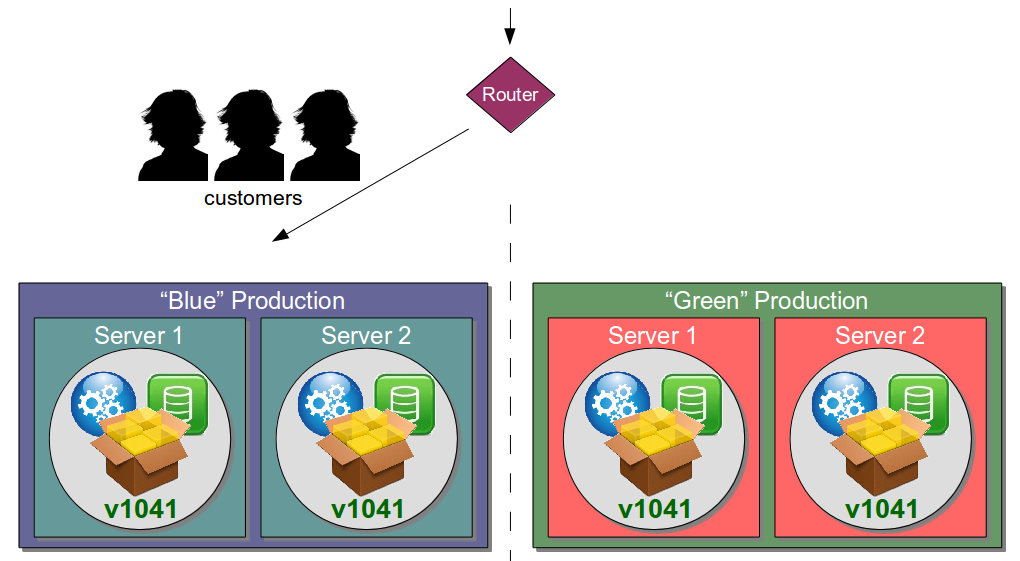

The root cause of these problems is the overly contextual nature of a Hardcoded Stub. Rather than predicting test scenarios upfront and providing tightly controlled pathways through provider behaviours, a better approach is to use a Configurable Test Stub – a Configurable Test Double primed by different automated tests and/or exploratory testers to compose provider behaviours. This would mean an Authentication Stub with a private, test-only API able to create users in a desired authentication state and return their generated credentials [1a and 2a] before the standard Authentication API is used [1b and 2b].
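
A minimal Python sketch of such a stub follows, under stated assumptions: `create_user` stands in for the private, test-only API and `login` for the standard Authentication API. Both names, the state strings, and the in-memory store are hypothetical.

```python
import threading
import uuid
from dataclasses import dataclass


@dataclass(frozen=True)
class Credentials:
    username: str
    password: str


class ConfigurableAuthenticationStub:
    """Hypothetical stub whose behaviour is composed by its consumers."""

    def __init__(self) -> None:
        self._lock = threading.Lock()  # guards the store when tests run in parallel
        self._users = {}  # username -> (password, state)

    def create_user(self, state: str) -> Credentials:
        """Private, test-only API: create a user in the requested state."""
        credentials = Credentials(
            username=f"user-{uuid.uuid4().hex}",
            password=uuid.uuid4().hex,
        )
        with self._lock:
            self._users[credentials.username] = (credentials.password, state)
        return credentials

    def login(self, username: str, password: str) -> str:
        """Stand-in for the standard Authentication API."""
        with self._lock:
            record = self._users.get(username)
        if record is None or record[0] != password:
            return "rejected"
        return record[1]


stub = ConfigurableAuthenticationStub()

# A Books test primes its own user, then exercises the standard API.
books_user = stub.create_user(state="authenticated")
books_result = stub.login(books_user.username, books_user.password)

# A Music test primes a different user in a different state.
music_user = stub.create_user(state="locked")
music_result = stub.login(music_user.username, music_user.password)

print(books_result)  # authenticated
print(music_result)  # locked
```

Because each test generates its own credentials, no two tests share a user, and the pool size no longer limits how many tests can run at once.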

By pushing responsibility for Authentication behaviours onto Books and Music, test data management is decentralised and tests become atomic. The Authentication Stub will have a much lower rate of change, Consumer Driven Contracts can be used to safeguard conversation integrity, and both Books and Music can parallelise their test suites to substantially reduce execution times.

A Hardcoded Stub may be an acceptable starting point for testing consumer/provider interactions, but it is unwieldy with a large test suite and unscalable with multiple consumers. A Configurable Test Stub will prevent nondeterministic test results from creeping into consumers and ensure fast feedback.